The Googlebot crawl limit has sparked concern among site owners who worry their content might not be fully processed. The 2 MB threshold refers specifically to the amount of raw HTML that Googlebot downloads during crawling. If a page exceeds that size, Googlebot may stop reading additional HTML beyond the limit.

This does not mean your entire page disappears from Google Search. It simply means that content beyond the 2 MB point in the HTML file may not be processed during crawling. For most web pages, this scenario rarely happens.

Understanding this distinction matters. The crawl limit only affects indexing when critical content appears after that 2 MB cutoff. In most cases, well-structured pages keep essential content near the top of the HTML, which reduces any real SEO impact.

How Googlebot Handles HTML During Crawling

Googlebot downloads the HTML first, then renders additional resources such as JavaScript and CSS. The 2 MB cap discussed here applies to the raw HTML file. Images and external assets are separate downloads and do not add to it.

That means your visual page size can be large while your HTML size stays small. Many modern web pages rely on external scripts and stylesheets, which are fetched as separate files and do not add to the size of the HTML document itself.

If your primary content loads within the first portion of HTML, indexing typically works as expected.

Why 2 MB Sounds Smaller Than It Actually Is

Two megabytes may sound restrictive, but in practice it is substantial. A typical HTML file often falls between 30 KB and 200 KB. Even large editorial or ecommerce pages frequently stay well under 1 MB of raw HTML.

Recent analysis of real-world page sizes shows that very few pages approach the 2 MB threshold. A page would need extremely bloated code, massive inline scripts, or thousands of repeated elements to reach that level.
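To put those figures in proportion, here is a minimal arithmetic sketch. It assumes 1 MB means 1024 × 1024 bytes, which is a convention chosen for illustration rather than something the limit itself specifies. The helper name `share_of_limit` is hypothetical.

```python
# Assumption: 1 MB = 1024 * 1024 bytes, so the limit is 2,097,152 bytes.
LIMIT_BYTES = 2 * 1024 * 1024


def share_of_limit(html_kb: float) -> float:
    """Return the percentage of the 2 MB limit used by an HTML file of html_kb kilobytes."""
    return html_kb * 1024 / LIMIT_BYTES * 100


# Sizes taken from the ranges mentioned above: 30 KB, 200 KB, and 1 MB (1024 KB).
for size_kb in (30, 200, 1024):
    print(f"{size_kb} KB uses {share_of_limit(size_kb):.1f}% of the limit")
```

Under that assumption, a 200 KB document uses roughly a tenth of the limit, and even a 1 MB document uses only half.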

For most websites, this limit is generous.


What the Latest Data Reveals About Real-World HTML Size

Recent data on the Googlebot 2 MB crawl limit suggests that the vast majority of websites are not affected. Industry analysis shows that most HTML documents are significantly smaller than the threshold.

Even content-heavy blogs, news sites, and service pages typically remain under the limit. This means the Googlebot crawl limit is not a widespread technical SEO problem.

The real concern is not size alone but structure. If your HTML is clean and logically organized, Googlebot processes it efficiently. That keeps your web pages eligible for proper indexing.

Findings from Industry Analysis

Data highlighted by Search Engine Journal indicates that only a small fraction of sampled pages exceed 2 MB in raw HTML. Those that do often contain excessive inline JavaScript or overly complex templates.

This reinforces an important point. Clean coding practices matter more than sheer page length.

When developers avoid unnecessary code duplication and keep templates streamlined, HTML size remains manageable.

Which Types of Sites Might Exceed the Limit

Certain large ecommerce category pages face higher risk. Pages with thousands of product listings embedded directly in HTML can grow quickly.

Other edge cases include:

- Auto-generated tag archives

- Forum pages with long comment threads

- Poorly optimized CMS templates

Still, these situations are exceptions rather than the rule.
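For category pages that embed listings directly in the HTML, a rough estimate shows how many items fit before the limit. The sketch below is illustrative only: the per-item and base template sizes are hypothetical inputs you would measure on your own pages, and the function name `max_items_before_limit` is an assumption, not part of any tool.

```python
# Assumption: 1 MB = 1024 * 1024 bytes.
LIMIT_BYTES = 2 * 1024 * 1024


def max_items_before_limit(per_item_bytes: int, base_bytes: int = 0) -> int:
    """Estimate how many repeated items fit in the HTML before 2 MB is reached.

    per_item_bytes: average markup size of one listing (measured on your own page).
    base_bytes: size of the template around the listings (header, footer, inline code).
    """
    if per_item_bytes <= 0:
        raise ValueError("per_item_bytes must be positive")
    remaining = LIMIT_BYTES - base_bytes
    return max(remaining // per_item_bytes, 0)


# Hypothetical example: 1,500 bytes per product card, 100 KB of surrounding template.
print(max_items_before_limit(1500, base_bytes=100 * 1024))
```

With those example numbers, well over a thousand product cards fit in a single document, which is why only unusually long unpaginated listings tend to be at risk.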

Does the Crawl Limit Affect Indexing and Rankings?

The SEO impact of the 2 MB limit is often overstated. Google does not automatically penalize pages that approach the threshold.

However, if essential content appears after the 2 MB mark in the HTML file, it may not be crawled. That can influence indexing completeness.

In practical terms, the crawl limit's effect on indexing depends on where content sits in the HTML, not on total page weight.

When Truncation Can Become a Problem

If product descriptions, structured data, or internal links load late in the HTML, they could be missed if the file exceeds 2 MB.

This is rare but possible on poorly optimized sites. In those cases, important signals may not be processed, which could indirectly affect visibility.

The solution is not panic. It is prioritization of key content higher in the HTML structure.
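One way to check placement is to find the byte offset of a key element and compare it with the cutoff. The following is a minimal sketch, assuming the cutoff behaves like a simple truncation at 2 × 1024 × 1024 bytes. The function names and the sample markup are hypothetical, and only the first occurrence of the marker is checked.

```python
from typing import Optional

# Assumption: the cutoff acts like a plain truncation at 2 * 1024 * 1024 bytes.
LIMIT_BYTES = 2 * 1024 * 1024


def marker_end_offset(html: bytes, marker: bytes) -> Optional[int]:
    """Return the byte offset where the first occurrence of marker ends, or None."""
    start = html.find(marker)
    return None if start == -1 else start + len(marker)


def survives_truncation(html: bytes, marker: bytes, limit: int = LIMIT_BYTES) -> bool:
    """True if the first occurrence of marker lies entirely within the first `limit` bytes."""
    end = marker_end_offset(html, marker)
    return end is not None and end <= limit


# Synthetic pages: structured data placed early versus after 2 MB of filler.
schema = b'<script type="application/ld+json">{}</script>'
early_page = b"<html><body><h1>Product</h1>" + schema + b"</body></html>"
late_page = b"<html><body>" + b"x" * LIMIT_BYTES + schema + b"</body></html>"

print(survives_truncation(early_page, b"application/ld+json"))  # True
print(survives_truncation(late_page, b"application/ld+json"))   # False
```

The same check can be run against a saved copy of a real page, using a marker such as a heading ID or a schema type that identifies the content you care about.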

Why Most Sites Experience No Ranking Issues

Most sites organize content logically. Headings, body copy, metadata, and schema typically appear early in the HTML document.

Because of this, even if a page were unusually large, Googlebot would still capture the most important signals.

That is why the Googlebot crawl limit rarely creates ranking problems in real-world scenarios.

How to Keep Your HTML Size SEO-Friendly

Managing HTML size for SEO is part of good technical hygiene. It improves crawl efficiency and overall web performance.

While most web pages are safe, reducing unnecessary code helps future-proof your site.

Think of it as preventive maintenance rather than emergency repair.

Simple Ways to Reduce HTML File Size

Here are practical steps:

- Minify HTML output

- Remove unused inline CSS or JavaScript

- Avoid excessive DOM nesting

- Paginate long lists instead of loading everything at once

- Clean up bloated CMS plugins

Small improvements can dramatically reduce HTML size without affecting design or usability.
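Before trimming anything, it helps to know how much of the document is inline code. The sketch below uses Python's standard `html.parser` module to total the bytes inside inline `<script>` and `<style>` elements. The class and function names are hypothetical, and the result is an approximation intended for triage rather than an exact audit.

```python
from html.parser import HTMLParser


class InlineCodeCounter(HTMLParser):
    """Totals the bytes of text found inside inline <script> and <style> elements."""

    def __init__(self):
        super().__init__()
        self._current = None  # tag currently being read, if it is script or style
        self.inline_bytes = {"script": 0, "style": 0}

    def handle_starttag(self, tag, attrs):
        if tag in self.inline_bytes:
            self._current = tag

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None

    def handle_data(self, data):
        # External scripts (<script src=...>) have no inline text, so they add nothing.
        if self._current is not None:
            self.inline_bytes[self._current] += len(data.encode("utf-8"))


def inline_code_bytes(html: str) -> dict:
    """Return the inline script and style byte totals for an HTML string."""
    counter = InlineCodeCounter()
    counter.feed(html)
    counter.close()
    return counter.inline_bytes


sample = (
    "<html><head><style>p{color:red}</style>"
    '<script src="a.js"></script></head>'
    "<body><script>var a=1;</script><p>hi</p></body></html>"
)
print(inline_code_bytes(sample))  # {'script': 8, 'style': 12}
```

If inline code accounts for a large share of the document, moving it to external files is usually the quickest reduction.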

When to Worry and When Not To

You should investigate if:

- Your raw HTML exceeds 1.5 MB

- Important content loads very late in the document

- Your CMS generates repetitive markup

If your HTML file is well under 1 MB, there is little reason for concern.
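The size thresholds above can be expressed as a simple triage rule. This is a sketch under stated assumptions: 1 MB is taken as 1024 × 1024 bytes, and the "monitor" band between 1 MB and 1.5 MB and the "over limit" label are added here for completeness and are not part of the guidance above.

```python
MB = 1024 * 1024  # assumption: binary megabytes


def triage(html_bytes: int) -> str:
    """Classify a raw HTML size using the 1 MB, 1.5 MB, and 2 MB thresholds."""
    if html_bytes > 2 * MB:
        return "over limit"  # label added for illustration
    if html_bytes > 1.5 * MB:
        return "investigate"
    if html_bytes < 1 * MB:
        return "little reason for concern"
    return "monitor"  # band between 1 MB and 1.5 MB, added for illustration


for size in (200 * 1024, int(1.2 * MB), int(1.8 * MB), 3 * MB):
    print(size, triage(size))
```

Size is only one of the three signals listed. Late-loading content and repetitive markup still need a manual look.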


What This Means for Your Technical SEO Strategy

Technical SEO is about efficiency. The Googlebot crawl limit is just one piece of a broader system that includes crawl budget, rendering, and indexing.

A well-optimized site supports both search engines and users.

Rather than obsessing over a hard limit, focus on structured content, fast loading speeds, and clean markup. That approach minimizes any negative SEO impact while improving user experience.

Balancing Crawl Efficiency and User Experience

Fast, organized HTML benefits everyone. Search engines process it quickly. Users experience smoother performance.

Avoid loading massive content blocks at once. Break content into logical sections when necessary. Use pagination wisely.

This creates a cleaner structure that supports long-term growth.

Practical Next Steps for Site Owners

Start by checking your page source and measuring raw HTML size. Many browser tools allow you to inspect document weight directly.

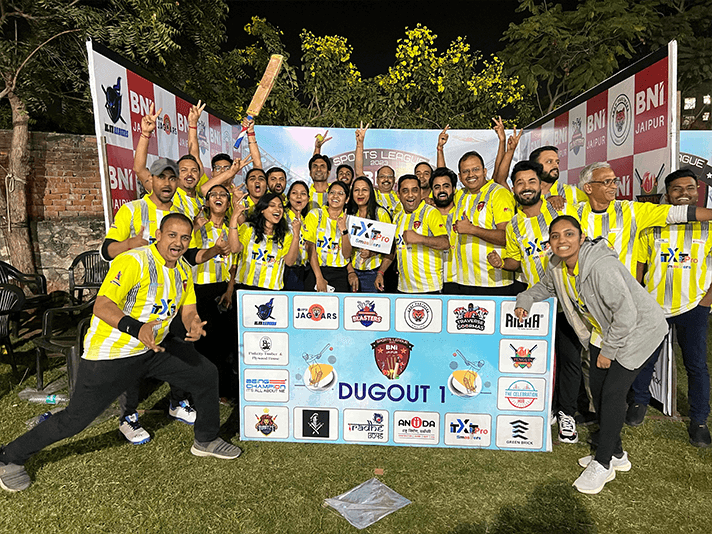
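If you prefer a script to browser tools, the measurement can be sketched with Python's standard library. This is a minimal example under stated assumptions: 1 MB is taken as 1024 × 1024 bytes, the function names and the User-Agent string are hypothetical, and the fetch does not reproduce how Googlebot requests or processes a page. `urllib` does not request compressed responses by default, so the byte count normally reflects the uncompressed document.

```python
import urllib.request

# Assumption: 1 MB = 1024 * 1024 bytes.
LIMIT_BYTES = 2 * 1024 * 1024


def describe_size(raw_html: bytes, limit: int = LIMIT_BYTES) -> dict:
    """Summarize the size of a raw HTML document relative to the limit."""
    size = len(raw_html)
    return {
        "bytes": size,
        "kilobytes": round(size / 1024, 1),
        "percent_of_limit": round(size / limit * 100, 1),
        "over_limit": size > limit,
    }


def fetch_raw_html(url: str, timeout: float = 10.0) -> bytes:
    """Download the HTML document only; linked images, CSS, and JS are not fetched."""
    request = urllib.request.Request(
        url, headers={"User-Agent": "html-size-check/0.1"}  # hypothetical UA string
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read()
```

For example, `describe_size(fetch_raw_html("https://example.com/"))` returns the byte count and the share of the limit used, where the URL is a placeholder for your own page.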

If you are unsure how to interpret the data, working with specialists helps. At ITXITPro, we usually recommend technical audits that evaluate HTML size, crawl behavior, and indexing patterns together.

The goal is clarity, not fear. For most sites, the Googlebot 2 MB crawl limit is simply not a problem.

FAQ

1. What is the Googlebot crawl limit?

The Googlebot crawl limit refers to the maximum amount of raw HTML Googlebot downloads per page during crawling. Currently, that threshold is 2 MB. Content beyond that point may not be processed, but this rarely affects most properly structured websites.

2. What does the 2 MB limit apply to exactly?

The limit applies only to raw HTML content. It does not include images, external CSS files, or separately loaded JavaScript resources. Googlebot reads the HTML first, and the 2 MB cap affects only that initial document download.

3. Is 2 MB a small HTML size for modern web pages?

No, 2 MB is relatively large for raw HTML. Most pages fall well below this threshold. Even feature-rich sites typically keep HTML size under 1 MB, making the limit generous for standard website structures.

4. How can I check my HTML file size?

You can view page source in your browser and inspect network requests using developer tools. Look at the document request size to see how large your HTML file is before additional resources load.

5. Does the crawl limit affect rankings directly?

There is no direct ranking penalty tied to exceeding 2 MB. Issues only arise if critical content is placed after the cutoff and is not crawled, which could indirectly influence indexing completeness.

6. What happens if my page exceeds 2 MB?

If your page exceeds 2 MB of raw HTML, Googlebot may stop processing additional content beyond that point. Important information placed late in the file might not be crawled or indexed fully.

7. Are ecommerce websites at higher risk?

Some ecommerce sites with large category pages may approach the limit, especially if thousands of products load at once. Proper pagination and template optimization usually prevent issues related to HTML size.

8. Does JavaScript count toward the 2 MB limit?

Inline JavaScript within the HTML document counts toward the limit. External JavaScript files are fetched as separate resources, so they do not add to the size of the HTML document during the initial crawl.

9. How does HTML size influence crawl budget?

Large HTML files can consume more crawl resources. While 2 MB is rarely an issue, cleaner and smaller documents improve crawl efficiency and may help search engines process more pages within your crawl budget.

10. Should I reduce page size even if I am under 2 MB?

Yes, keeping HTML lean supports better performance and crawl efficiency. Even if you are under 2 MB, optimizing structure and removing unnecessary code improves both user experience and long-term SEO stability.